Music creation is often described as an artistic process, but in many modern workflows it is also an operational problem. Content teams need more versions, shorter turnaround times, faster experimentation, and tighter alignment with changing formats. A creator publishing once a week can work one way. A team producing daily clips, ads, explainers, intros, trailers, and short campaigns has a completely different relationship with sound. In that context, an AI Music Generator becomes valuable for a very specific reason: it reduces the lag between needing music and having something usable enough to test.

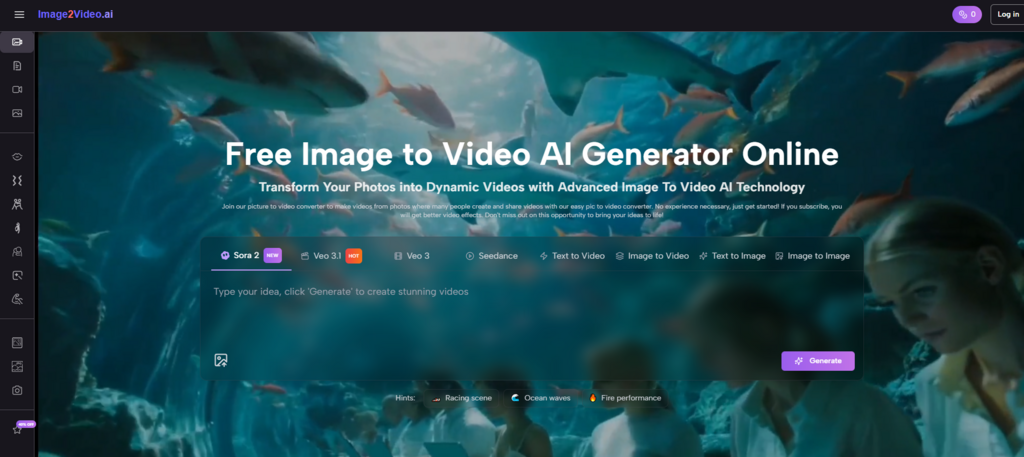

That is a strong lens for understanding ToMusic. The platform is often introduced through creative language, but its usefulness becomes even clearer when seen through production pressure. It takes textual direction, lyrics, or stylistic intent and turns them into audio drafts quickly enough to fit real publishing cycles. It is not only about artistic inspiration. It is also about maintaining momentum when content pipelines leave no room for traditional music workflows.

This matters because many teams do not need one perfect song. They need multiple workable options, each aligned with a slightly different audience or message. They may need a more emotional version, a lighter version, a cleaner intro, or a tone that feels more commercial than cinematic. ToMusic’s structure makes that kind of variation easier to generate without moving into a full production environment every time.

Why Modern Content Work Changes What “Useful Music” Means

A great track in a streaming context is not the same as a useful track in a content operations context. For content teams, usefulness often means speed, relevance, flexibility, and repeatability.

A short-form video may only need twenty seconds of emotional clarity. A podcast intro needs identity without distraction. An ad variation may need the same broad tone with different emphasis. A trailer or promo piece may need stronger lift without becoming melodramatic. These are practical problems, and they require music workflows that support iteration rather than long composition cycles.

ToMusic fits this logic because it begins with text-driven direction. A user can define genre, mood, tempo, instrumentation, and vocal style in words instead of building everything manually.

Why Text-Led Generation Fits Fast Publishing

When the creative team already knows the feeling a piece of content needs, entering that feeling directly is often more efficient than trying to simulate it through manual construction.

Why Variation Matters More Than A Single Output

In content production, one version is rarely enough. Teams often need alternatives for testing, localization, or format-specific edits. A generation system that supports comparison is therefore more useful than one that only emphasizes novelty.

Why Operational Fit Is A Real Product Advantage

A tool that saves time without destroying creative intent has value far beyond hobby experimentation. It fits into the everyday rhythm of publishing work.

How ToMusic Supports A More Agile Music Workflow

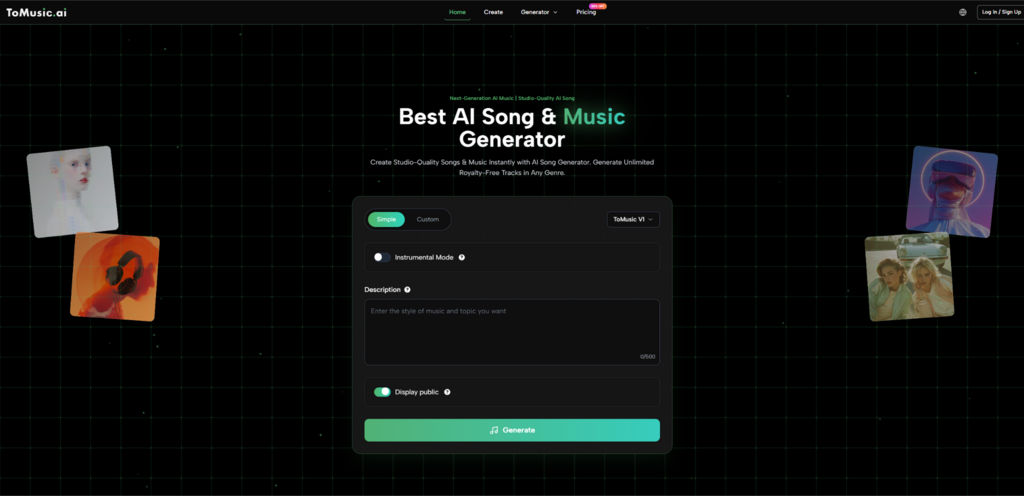

The platform provides access to four models, supports prompt-based or lyrics-based generation, and stores outputs in a cloud music library. Taken together, those features create a workflow that is more flexible than a single-model system.

From a content operations point of view, the product has three practical strengths. First, it accepts the kind of inputs teams naturally use: descriptive language. Second, it allows users to compare different output types across several models. Third, it stores results, making them easier to revisit and reuse.

That combination matters because content work is cumulative. A team that produces music-adjacent assets repeatedly benefits from patterns, memory, and faster decision cycles.

Why The Four Models Matter For Production Teams

ToMusic’s four-model structure is useful not only for creative nuance but also for operational decision-making.

| Model | Character in Production | Most Likely Operational Use |
| --- | --- | --- |
| V1 | Faster and more streamlined | Quick-turn content drafts |
| V2 | Extended and tonally deep | Ambient or cinematic content beds |
| V3 | Richer harmonies and structure | More layered campaign music |
| V4 | Stronger vocals and control | Vocal-led branded pieces |

This setup makes the platform feel less like a generic generator and more like a menu of production strategies. Not every project needs the same musical behavior. Some need speed. Some need vocal presence. Some need a broader emotional canvas. The model lineup acknowledges that difference directly.

A Three-Step Workflow That Matches Busy Teams

The official process is simple enough to fit into real production schedules, which is part of its appeal.

Step 1. Pick The Model And Input Style

The team chooses the model based on the job and decides whether to start with a descriptive prompt or with custom lyrics. This sets the creative direction quickly.

Step 2. Define The Content Need In Musical Terms

At this stage, the prompt can describe mood, tempo, instrumentation, energy level, and voice characteristics. If lyrics are involved, they can be entered directly in custom mode.

Step 3. Generate, Compare, And Save

Once the track is created, it is stored in the music library, where different versions can be reviewed against the needs of the content piece or campaign.

Why Prompt Writing Resembles Creative Operations Briefing

In content environments, the most important internal document is often not a script or edit timeline. It is the brief. What should the asset feel like, communicate, support, or avoid? ToMusic fits naturally into that culture because prompt writing resembles briefing.

A content lead can specify that the track should feel optimistic but not childish, modern but not overly synthetic, promotional but not aggressive. That is the same language often used in campaign reviews or editorial direction. The platform converts that style of instruction into audio rather than forcing the team to translate everything into production jargon.

Why This Helps Mixed-Skill Teams

Not every person involved in a campaign understands music production. But many people can identify the mood, pacing, and emotional posture a piece should have. That makes music generation more collaborative.

Why It Also Helps With Feedback Cycles

When feedback is expressed in plain language, revision notes can be turned directly into new prompts instead of remaining vague, abstract complaints.

How Lyrics-Based Generation Helps Brand-Led Projects

Branded music is a small but important corner of content production. Teams sometimes need a product theme, a campaign hook, a quick internal anthem, a parody structure, or a lyric-led social concept. That is where Lyrics to Music AI becomes especially useful.

Lyrics-based generation lets teams test whether a line or slogan actually works when sung. A phrase that looks catchy on a slide may feel awkward in performance. A chorus concept may seem too repetitive or too flat once placed inside music. Hearing those issues early saves time and prevents false confidence.

Why This Matters For Marketing Work

Brand messaging often feels stronger in text than in sound. Lyrics-based generation provides a reality check before more resources are committed.

Why It Also Supports Creative Brainstorming

Even if the generated version is not the final deliverable, it can help teams evaluate whether the concept deserves further development.

Why Non-Musical Teams Benefit From This Most

Many marketers and editors do not need finished music as much as they need rapid evidence. Lyrics-based generation offers that evidence.

Why Saved Outputs Are Operationally Important

ToMusic stores generations in a music library with associated titles, descriptions, lyrics, and parameters. This matters because content production depends on retrieval almost as much as creation.

A good result is far more valuable when it can be found later, compared with newer versions, or reused as a tonal reference. Without storage and context, teams end up recreating ideas from memory or losing promising directions entirely.

Why The Library Supports Better Review

Stakeholders can compare alternatives with more clarity when versions remain organized. This improves feedback quality.

Why Reusability Increases Long-Term Value

A saved track may become a reference point for future campaigns, intros, or recurring video formats. That makes the platform more useful over time.

Why Version Memory Reduces Waste

Teams often spend unnecessary time trying to rediscover a direction they already found once. Saved outputs reduce that problem.

What The Platform Can Change For Different Roles

ToMusic affects different users in different ways, which is one reason it can fit a range of content environments.

Editors Gain Faster Mood Matching

They can search for a tonal fit without waiting for a separate composition cycle.

Marketers Gain More Campaign Variants

They can test different emotional directions for the same message.

Writers Gain Early Audio Feedback

They can hear whether lines feel too dense, too plain, or surprisingly effective in musical form.

Founders Gain Prototype Speed

They can turn a brand or product idea into something audible enough to pitch, test, or refine.

Where The Limits Still Matter

No operational tool should be mistaken for a guaranteed final-answer machine. Output quality still depends on input quality. A vague brief can produce a vague track. A strong first draft may still need several additional generations before it fits the actual content perfectly. Music intended for high-profile brand use may still require human editorial filtering.

But those limitations do not undermine the platform’s utility. They simply clarify what kind of utility it offers. ToMusic is strongest as a speed-and-variation layer that sits before final selection, not as a replacement for every downstream creative decision.

Why Multiple Generations Are Often A Strength

In content workflows, variation is not a sign that the system failed. Variation is often exactly what the team needs in order to choose wisely.

Why Human Review Still Decides The Winner

The platform can create options quickly. It cannot decide which option best matches audience intent, brand tone, or editorial context. That remains a human choice.

Why This Division Feels Healthy

Operationally, the best tools are the ones that accelerate decisions without pretending to remove judgment entirely.

Why ToMusic Belongs In A Modern Content Stack

The clearest way to understand ToMusic is to stop seeing it only as a consumer music toy and start seeing it as a practical content-layer tool. It accepts briefs in natural language, generates multiple musical possibilities, supports lyric-led experiments, and stores outputs in a way that makes iteration easier. Those are not abstract advantages. They map directly to the everyday needs of content production.

For teams working under time pressure, the real win is not instant perfection. It is faster clarity. A platform that helps people hear the shape of a campaign, a scene, a message, or a concept before committing to a final path already provides meaningful leverage. That is why ToMusic fits the real pace of modern publishing so well. It helps creative work move at the speed content now demands, while still leaving room for taste, revision, and choice.