The promise of modern creative software is often described in sweeping terms, but most working creators do not need sweeping promises. They need something simpler: a way to make existing visuals communicate more clearly in environments where still images are easy to scroll past. That is why Image to Video AI deserves a closer look. Its appeal is not just that it can animate an image. Its deeper appeal is that it offers a compact way to turn a finished visual into a short moving asset without forcing the user into a full editing workflow from the start.

That distinction matters. Many teams are not trying to become video studios overnight. They already have visuals that work: portraits, product photos, illustrations, brand images, old photographs, or campaign art. Their problem is that modern platforms increasingly reward motion, not stillness. Closing the gap between “we have a good image” and “we need a usable short video” used to require more time, more tools, or more specialized labor. A web-based system that keeps the process narrow and direct changes that equation. In my view, that is where the real usefulness begins.

Why Motion Now Matters Even For Static Assets

A strong still image still matters, but online communication has changed. In social feeds, landing pages, advertising units, and creator portfolios, movement tends to earn a second glance. That does not mean motion is always better than stillness. It means motion often buys attention long enough for the original visual idea to land.

This is especially important for teams that already invest in design. A well-composed image contains deliberate choices about framing, subject emphasis, color, and mood. Recreating those choices inside a separate video process can be inefficient. A tool built around image-to-video generation attempts to preserve what is already working while adding temporal energy.

Attention Is Part Of The Modern Design Brief

Design used to end when the image was approved. Now the question often continues: how does this asset behave once it enters a platform dominated by motion? A static poster in a feed competes with clips, loops, and animated fragments. Even subtle movement can change how long a viewer stays with the content.

The Best Motion Often Starts With Restraint

One mistake people make with emerging AI tools is expecting maximum transformation. But in practical creative work, the most effective result is often minimal. A slight camera move, environmental drift, or small subject motion can preserve the strength of the original image while making it feel more present. In my observation, that balance is where these tools become genuinely useful rather than merely impressive.

How The Platform’s Workflow Reflects That Goal

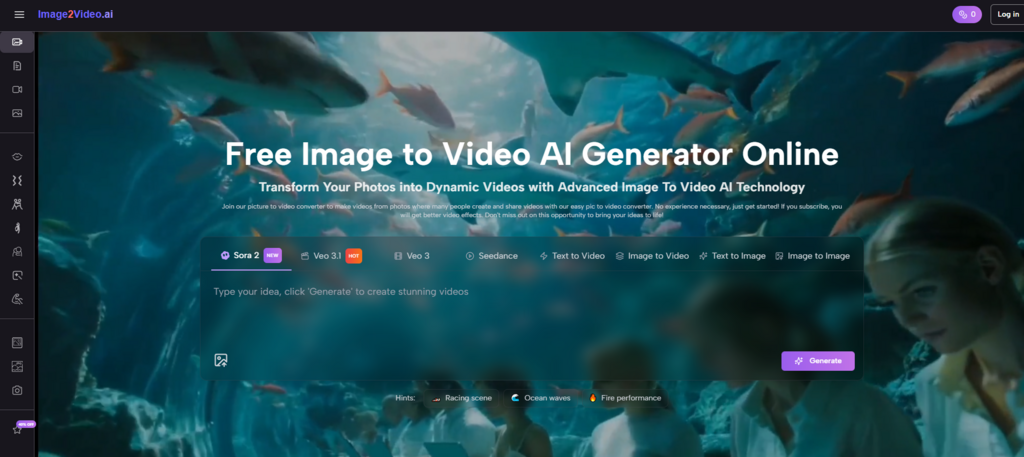

The official flow presented on the site stays intentionally simple. That simplicity is not a weakness. It is part of the product philosophy.

Step One Uses A Ready-Made Visual Asset

The process begins by uploading an image. This immediately tells you something about the target user. The platform assumes that a lot of creative value already exists in static form. The user does not need to prepare a full storyboard or edit a sequence by hand. They begin with a single image file and treat it as the base layer for motion.

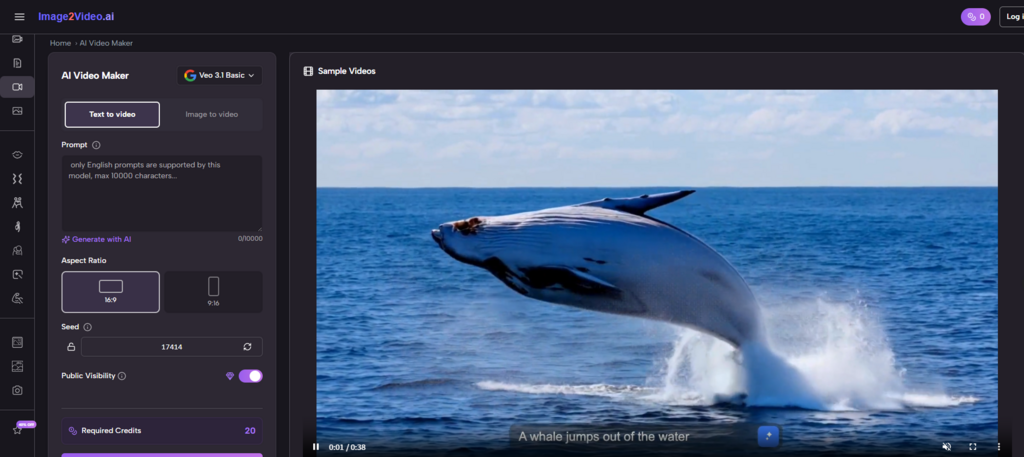

Step Two Converts Intent Into A Prompt

After the image is uploaded, the user writes a text instruction describing how the scene should move or feel. This is where the product becomes more than a one-click animation effect. Instead of applying generic movement, it asks for creative intent.

Language Becomes A Motion Interface

That is an important shift in interface design. Traditional motion tools are often built around timelines, keyframes, and parameter control. Here, the entry point is language. The user can express mood, movement, pacing, and camera behavior in a form that feels closer to directing than editing.

Step Three Generates A Short Motion Result

The platform then processes the image and prompt into a short video output. The official framing suggests a concise clip rather than long-form video production. That sets the right expectation. The tool is designed for short generative output, which aligns with many present-day use cases such as social clips, ad fragments, visual teasers, and animated previews.

Step Four Produces A Downloadable Video Asset

Once the generation is finished, the output can be downloaded and used elsewhere. This is important because it reveals the platform’s real role in a workflow. It is not trying to do everything. It creates a motion asset that can be repurposed for publishing, editing, presentation, or promotion.
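For readers who think in code, the four steps above can be modeled as a small data flow. This is only a sketch under stated assumptions: the site does not document a programming interface here, so every name below (`GenerationRequest`, `fake_generate`, `download`) is hypothetical, the accepted file types are a guess, and the generation step is a stub that returns placeholder bytes rather than a real clip.

```python
from dataclasses import dataclass
from pathlib import Path

# Assumption: the accepted image types are illustrative, not taken from the platform.
ALLOWED_SUFFIXES = {".png", ".jpg", ".jpeg", ".webp"}


@dataclass(frozen=True)
class GenerationRequest:
    """Steps one and two: a finished image plus a text prompt describing the motion."""
    image_path: Path
    prompt: str


@dataclass(frozen=True)
class GenerationResult:
    """Steps three and four: a short clip that can be saved and reused elsewhere."""
    video_bytes: bytes
    suggested_name: str


def validate(request: GenerationRequest) -> None:
    """Reject obviously unusable inputs before any generation is attempted."""
    if request.image_path.suffix.lower() not in ALLOWED_SUFFIXES:
        raise ValueError(f"unsupported image type: {request.image_path.suffix}")
    if not request.prompt.strip():
        raise ValueError("prompt must describe the intended motion")


def fake_generate(request: GenerationRequest) -> GenerationResult:
    """Hypothetical stand-in for the platform's generation step (no real model is called)."""
    validate(request)
    return GenerationResult(
        video_bytes=b"placeholder-clip",
        suggested_name=request.image_path.stem + ".mp4",
    )


def download(result: GenerationResult, out_dir: Path) -> Path:
    """Write the clip to disk so it can be published, edited, or presented elsewhere."""
    out_dir.mkdir(parents=True, exist_ok=True)
    target = out_dir / result.suggested_name
    target.write_bytes(result.video_bytes)
    return target
```

The point of the sketch is the shape of the flow, not the implementation: one image and one prompt go in, one reusable file comes out, and nothing in between asks the user to touch a timeline.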

How This Fits Different Types Of Creative Work

The value of a tool becomes clearer when you stop asking whether it is universally powerful and start asking where it fits best. In that sense, image-based video generation serves several very concrete roles.

It Helps Marketers Extend Existing Campaign Assets

Marketing teams often already have approved visuals but need motion variations for paid ads, landing pages, and social media. Instead of rebuilding everything from scratch, they can use a short Photo to Video generation workflow to extend the life of those assets.

It Helps Creators Prototype Faster

An illustrator or designer may want to see whether a static composition gains emotional strength when it moves. A short AI-generated clip becomes a fast prototype. It may not be the final version, but it gives immediate feedback on whether the idea works in motion.

It Helps Small Teams Work More Flexibly

Not every team has a dedicated motion designer for every task. A lightweight tool that turns images into short video clips can help teams test content directions before deciding whether more production effort is justified.

Why This Workflow Feels Different From Traditional Editing

It is easy to misunderstand tools like this by comparing them unfairly with full editing suites. A better comparison is to ask what kind of friction they remove.

| Creative Question | Traditional Method | AI Image-To-Video Method |
| --- | --- | --- |
| How do we add motion to this image? | Build motion manually in editing software | Upload image and describe movement |
| How fast can we test an idea? | Often slower due to setup | Faster first-pass experimentation |
| What skill is most important? | Software technique and editing control | Prompt clarity and visual judgment |
| What kind of output works best? | Detailed, highly controlled sequences | Short, concept-driven motion clips |
| Where is it most useful? | Polished production and refinement | Early exploration and reusable content |

This comparison matters because it sets expectations correctly. The platform is not strongest where frame-level control matters most. It is strongest where a user wants to move from asset to animated concept with less friction.

How Model Choice Changes User Expectations

One notable aspect of the platform is that it presents itself less like a single-purpose animation toy and more like a gateway into multiple generative models. That matters because it changes the user’s relationship to the product. Instead of assuming one fixed output style, the user can think in terms of model behavior, generation quality, and different motion characteristics.

The Interface Hides Backend Complexity

From the outside, the workflow looks simple. But simplicity at the interface level often means complexity is being handled underneath. The user sees upload, prompt, and generate. Behind that experience is model routing, processing, and output handling. That kind of abstraction is useful because most users want the result, not the infrastructure.

Aggregation Can Be A Product Strength

A platform that brings several generation options into one place can become more useful than a tool tied to only one model. In practical use, people rarely care about a model name by itself. They care whether the result feels stable, expressive, and worth keeping. A multi-model structure can make that experimentation easier.
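One way to picture the multi-model structure described in this section is a thin router that keeps several generation backends behind a single call. The sketch below is purely illustrative and assumes nothing about how the platform is actually built: `ModelRouter`, `subtle_model`, and `stylized_model` are hypothetical names, and the backends return text descriptions instead of video.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# Hypothetical backend signature: (image_name, prompt) -> description of the clip.
Generator = Callable[[str, str], str]


def subtle_model(image_name: str, prompt: str) -> str:
    """Stand-in for a backend that favors restrained motion."""
    return f"{image_name}: subtle motion for '{prompt}'"


def stylized_model(image_name: str, prompt: str) -> str:
    """Stand-in for a backend that favors more expressive motion."""
    return f"{image_name}: stylized motion for '{prompt}'"


@dataclass
class ModelRouter:
    """Hides backend choice behind one generate() call, with a default model."""
    backends: Dict[str, Generator]
    default: str

    def generate(self, image_name: str, prompt: str, model: Optional[str] = None) -> str:
        # Fall back to the default backend when the caller does not pick one.
        name = model if model is not None else self.default
        if name not in self.backends:
            raise KeyError(f"unknown model: {name}")
        return self.backends[name](image_name, prompt)

    def compare(self, image_name: str, prompt: str) -> Dict[str, str]:
        """Run the same image and prompt through every backend for side-by-side review."""
        return {name: run(image_name, prompt) for name, run in self.backends.items()}


router = ModelRouter(
    backends={"subtle": subtle_model, "stylized": stylized_model},
    default="subtle",
)
```

Calling `router.compare("poster.png", "slow drift")` returns one result per backend, which mirrors the practical habit the section describes: people compare outcomes and keep the one that feels stable and expressive, rather than choosing by model name.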

Why Photo To Video Feels More Important Than It Sounds

The phrase Photo to Video may sound technical, but it points to a larger cultural shift. It suggests that photographs and designed images are no longer fixed endpoints. They are flexible source material. That changes how creators think about planning.

A photographer can now imagine a still image as a potential motion asset. A brand team can design campaign visuals with later animation in mind. A teacher can treat archived visual material as something that might become a more immersive presentation. A social creator can turn a strong cover image into a teaser clip without building a full production chain.

That flexibility is important because it reduces waste in content creation. Instead of creating entirely separate assets for every channel, a single image can branch into multiple formats.

Where The Output Feels Most Convincing

In my experience with AI creative tools, success often depends less on whether the model is “powerful” in abstract terms and more on whether the motion in the output reads as a natural extension of the input image.

Portraits Work Best With Controlled Motion

A portrait often becomes stronger when motion remains subtle. Slight subject movement, a hint of depth, or a gentle camera push can preserve realism better than exaggerated action.

Products Benefit From Directional Clarity

For product visuals, the goal is usually not drama. It is emphasis. A clear sense of focus, form, and atmosphere often works better than highly complex motion.

Illustrations Can Gain Emotional Range

Illustration-based content can sometimes tolerate more stylized motion because the source material already invites interpretation. That makes this category especially interesting for concept artists and storytellers.

Mood Consistency Matters Across All Cases

Whether the source is photographic or illustrative, the most believable result is usually the one that respects the original mood. Motion should reveal what is already implied, not contradict it.

What Users Should Understand Before Relying On It

A credible view of the platform has to include its limits. AI generation reduces labor in some parts of the process, but it does not remove the need for selection and judgment.

The First Result Is Not Always The Final One

Users should expect iteration. Prompt changes can meaningfully affect the result, and some generations will align with the source image more convincingly than others.

Short Video Length Shapes The Creative Role

Because the platform is designed around short output, it is especially useful for snippets, loops, teasers, previews, and lightweight campaign assets. It is less about sustained long-form storytelling and more about concentrated visual impact.

Good Inputs Still Matter A Great Deal

A weak source image rarely becomes excellent motion automatically. The tool works best when the original image already has strong composition, identifiable subject focus, and a clear visual mood.

Why This Matters For Future Creative Work

The larger significance of platforms like this is not that they make every creator a filmmaker. It is that they introduce a more fluid relationship between media forms. A still visual can now travel further before it reaches its limit. That changes planning, budgeting, and experimentation.

Designers may start creating images with motion potential in mind. Marketers may build campaigns around adaptable assets rather than separate media silos. Small teams may test more ideas because the cost of testing drops. None of this eliminates the value of high-end production. It simply widens the set of people who can explore motion as part of communication.

What This Tool Ultimately Helps People Do

At its best, the platform helps users make a more natural transition from visual concept to short moving expression. It does not ask them to master a full production environment first. It asks them to begin with an image they already understand and describe how that image should come alive.

That is a modest promise, but a useful one. In creative technology, modest promises often age better than exaggerated ones. A tool does not need to replace everything to matter. Sometimes it only needs to make one difficult step easier. In this case, the step is clear: taking a static visual that already has meaning and giving it enough motion to communicate in places where stillness alone may no longer be enough.