The modern digital landscape is characterized by an overwhelming volume of visual content, making it increasingly difficult for individual creators and brands to capture meaningful audience attention. Many storytellers face a significant hurdle: they possess high-quality static imagery but lack the technical expertise or massive budgets required for professional video animation. This gap often leads to missed engagement opportunities, as static posts are frequently bypassed in favor of dynamic, motion-based media. By leveraging the advanced synthesis capabilities of Seedance 2.0, creators can finally bridge this divide, turning singular portraits into fluid, high-fidelity video narratives that resonate with the fast-paced demands of social platforms.

Navigating The Evolution From Still Images To Fluid Narratives

In my observations of the generative media sector, the transition from 2D pixels to temporal video sequences represents one of the most challenging technical frontiers. Traditional animation requires a frame-by-frame understanding of physics, lighting, and anatomy, a process that is both time-consuming and prone to human error. The shift toward automated motion synthesis has fundamentally changed this dynamic, allowing for a more democratic approach to high-end visual production. Rather than spending days on a single sequence, creators can now experiment with complex movements in near real time, effectively lowering the barrier to entry for cinematic storytelling.

The psychological impact of motion cannot be overstated in a marketing context. Human eyes are biologically wired to prioritize movement over stillness, a trait that digital marketers increasingly exploit to improve click-through rates. When a character in an advertisement transitions from a static pose to a graceful dance or a speaking role, the perceived value of the content increases significantly. This evolution is not merely about adding “effects” but about creating a sense of life and presence that a photograph alone cannot convey.

The Technical Foundation Of Consistent Character Identity Synthesis

One of the primary frustrations in early AI video generation was the lack of character consistency. In many legacy systems, a person’s facial features would morph or flicker between frames, destroying the illusion of reality. In my testing of current high-end models, there is a visible improvement in how identity is anchored. By utilizing deep-feature extraction, the system creates a “digital fingerprint” of the source character, ensuring that their likeness remains stable even during large-range motions like spinning or jumping. This stability is the cornerstone of professional-grade Seedance 2.0 AI video output, as it allows for believable brand representation.

The underlying architecture relies on sophisticated motion-conditioned adapters that interpret the geometry of the human form. It is not just about moving pixels; it is about understanding how fabric folds, how muscles shift, and how light interacts with skin during movement. This depth of understanding ensures that the generated output feels grounded in physical reality. While no system is perfect, the current trajectory suggests that the “uncanny valley” effect is rapidly diminishing, paving the way for seamless integration into mainstream media.
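The “digital fingerprint” idea in this section can be made concrete with a small review aid. The sketch below is illustrative only: it assumes you already have one embedding vector per frame from some face encoder of your choice, and the function names and the 0.8 threshold are hypothetical, not part of Seedance 2.0. It flags frames whose embedding has drifted away from the reference portrait.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("a zero vector has no direction")
    return dot / (norm_a * norm_b)


def find_identity_drift(
    reference: Sequence[float],
    frames: Sequence[Sequence[float]],
    threshold: float = 0.8,  # hypothetical cut-off; tune for your encoder
) -> List[int]:
    """Return indices of frames whose similarity to the reference is below threshold."""
    return [
        index
        for index, frame in enumerate(frames)
        if cosine_similarity(reference, frame) < threshold
    ]


# Toy 3-dimensional "embeddings" (made-up numbers, for illustration only).
reference = [1.0, 0.0, 0.0]
frames = [
    [0.9, 0.1, 0.0],  # close to the reference
    [1.0, 0.0, 0.1],  # close to the reference
    [0.1, 1.0, 0.0],  # points in a different direction: drifted
]
print(find_identity_drift(reference, frames))  # [2]
```

A check like this does not replace watching the clip, but it can point a reviewer to the frames most worth inspecting.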

Measuring Performance Stability Across Diverse Lighting And Texture Profiles

Technical performance is often dictated by the complexity of the input material. In environments with harsh, contrasting shadows or intricate clothing textures like lace or sequins, the synthesis engine must work harder to maintain visual integrity. Based on my analysis of various output samples, the system performs exceptionally well when the source image has clear, unobstructed facial features and high-contrast boundaries between the subject and the background. The ability to preserve fine texture under these conditions is what separates professional tools from casual consumer filters.

However, environmental variables still play a role. For instance, extremely busy backgrounds can sometimes confuse the motion adapters, leading to minor artifacts near the edges of the frame. Understanding these technical nuances allows creators to optimize their source material, for example by choosing simpler backgrounds when the focus is on complex character motion, thereby improving the success rate during rendering. This iterative learning process is a standard part of the modern AI creative workflow.

Strategic Implementation Of Automated Motion In Modern Brand Storytelling

The economic shift toward AI-assisted production is forcing agencies to rethink their creative strategies. Instead of allocating the majority of a budget to the technical labor of animation, funds can now be redirected toward higher-level concept development and multi-channel distribution. This shift empowers smaller creative teams to produce content that was previously reserved for large-scale studios. By integrating automated motion into a brand’s narrative, the storytelling becomes more agile, allowing for rapid responses to cultural trends and real-time audience feedback.

In my view, the most successful brands will be those that use these tools to enhance, rather than replace, human creativity. The tool provides the “engine” for motion, but the “soul” of the story remains a human responsibility. Whether it is a virtual influencer performing a trending dance or a digital host explaining a complex product, the goal is always to build a deeper connection with the viewer through visual expression.

Operational Guide For Creating Professional Grade Digital Content Sequences

To achieve the best results, the workflow must be handled with a focus on precision and official platform procedures. The current system is optimized for speed and user accessibility, requiring only a few deliberate actions to move from a concept to a final video file.

- Step 1: Preparation And Selection Of The Reference Portrait

The first step requires the user to provide a high-resolution image that serves as the visual anchor. This image should ideally be a front-facing or three-quarter view portrait to give the algorithm the most data regarding the subject’s facial structure and proportions.

- Step 2: Configuring The Motion Template Or Vocal Input

Once the image is uploaded, the user selects the desired output mode. For rhythmic content, one might choose from a library of pre-defined movements. For speech-based content, the user provides a text script or an audio file that the system will use to drive the lip-syncing and facial expressions.

- Step 3: Final Rendering And Post Production Review

After the parameters are locked, the cloud-based engine processes the synthesis. The user then reviews the output for any inconsistencies, and if satisfied, exports the video for final use. This entire process typically takes less than a minute, depending on the length and complexity of the sequence.
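The three steps above can be summarized as a small data structure. This is a minimal sketch for planning purposes only: `RenderRequest` and its field names are hypothetical and do not reflect the actual Seedance 2.0 interface. It also assumes that each job uses exactly one output mode, and that a speech job takes either a script or an audio file but not both.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RenderRequest:
    """Hypothetical description of one job; not an official API object."""

    reference_image: str                   # Step 1: path to the portrait
    motion_template: Optional[str] = None  # Step 2, option A: pre-defined movement
    script_text: Optional[str] = None      # Step 2, option B: text to be spoken
    audio_file: Optional[str] = None       # Step 2, option B: recorded speech

    def mode(self) -> str:
        """Return 'motion' or 'speech', or raise if the inputs are ambiguous."""
        if not self.reference_image:
            raise ValueError("a reference portrait is required (Step 1)")
        has_motion = self.motion_template is not None
        has_speech = self.script_text is not None or self.audio_file is not None
        if has_motion == has_speech:
            raise ValueError(
                "choose exactly one: a motion template or a speech input (Step 2)"
            )
        if self.script_text is not None and self.audio_file is not None:
            raise ValueError("provide a script or an audio file, not both")
        return "motion" if has_motion else "speech"


request = RenderRequest(reference_image="portrait.png", motion_template="dance_01")
print(request.mode())  # motion
```

Writing the choices down this way before uploading anything makes it harder to start a render with a missing portrait or conflicting inputs.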

Critical Criteria For Selecting High Quality Reference Source Material

The quality of the source image is the single most important factor in the final video output. Images with low resolution or heavy digital noise tend to produce muddy video results, as the AI struggles to “invent” missing detail during the motion phase. Professional creators often use AI-upscaled photos as their starting point to ensure that the skin textures and eye details are sharp enough for the motion synthesis engine to track accurately across frames.

Furthermore, lighting consistency in the source image is vital. If one side of the face is in deep shadow while the other is brightly lit, the system may struggle to maintain the shadow’s shape during a 360-degree turn or a rapid movement. Selecting images with soft, even lighting—similar to a professional studio setup—will almost always result in a more realistic and stable video generation.
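The lighting advice above can be turned into a rough pre-flight check. The sketch below is an assumption-laden illustration, not a feature of the platform: it takes a grayscale image as a plain grid of brightness values (0 to 255), compares the average brightness of the left and right halves, and treats a ratio below a hypothetical 0.6 as a sign of uneven lighting.

```python
from typing import Sequence


def lighting_balance(gray: Sequence[Sequence[float]]) -> float:
    """Ratio of the darker half's mean brightness to the brighter half's (0 to 1).

    1.0 means the left and right halves are equally bright on average.
    """
    if not gray or not gray[0]:
        raise ValueError("image must have at least one pixel")
    width = len(gray[0])
    if width < 2:
        raise ValueError("image must be at least two pixels wide")
    if any(len(row) != width for row in gray):
        raise ValueError("all rows must have the same width")
    half = width // 2  # for odd widths the middle column is ignored
    left = [value for row in gray for value in row[:half]]
    right = [value for row in gray for value in row[width - half:]]
    left_mean = sum(left) / len(left)
    right_mean = sum(right) / len(right)
    brighter = max(left_mean, right_mean)
    if brighter == 0:
        return 1.0  # uniformly black image: trivially balanced
    return min(left_mean, right_mean) / brighter


def is_evenly_lit(gray: Sequence[Sequence[float]], min_ratio: float = 0.6) -> bool:
    """True if the balance ratio meets the (hypothetical) minimum."""
    return lighting_balance(gray) >= min_ratio


# Tiny made-up examples: soft, even light versus a hard side shadow.
even_portrait = [[200, 210, 205, 198], [190, 205, 200, 195]]
harsh_portrait = [[30, 40, 220, 230], [35, 45, 225, 235]]
print(is_evenly_lit(even_portrait))   # True
print(is_evenly_lit(harsh_portrait))  # False
```

A left/right comparison is deliberately crude. It will not catch top-down shadows or colored light, so it is best used as a quick filter before a visual check.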

Economic Impact Of AI Video Synthesis On Creative Workflows

The democratization of video production tools has led to a significant reduction in the “cost-per-frame” for high-quality animation. This allows for a much higher volume of content production without a linear increase in costs. For digital marketers, this means the ability to A/B test different video styles, characters, and movements at a fraction of the traditional cost, leading to more data-driven and effective campaigns.

| Production Category | Conventional Manual Animation Method | Automated Synthesis Methodology |
| --- | --- | --- |
| Initial Setup Time | High (Days/Weeks) | Low (Minutes) |
| Human Labor Required | Multiple Specialists | One Creative Director |
| Per Shot Consistency | Prone to human variance | Algorithmic identity locking |
| Scalability Potential | Limited by staff hours | Theoretically unlimited via cloud |
| Revision Speed | Slow and expensive | Near-instant iteration |

Beyond the immediate cost savings, the true value lies in the speed of innovation. Creators can now prototype an entire video campaign in a single afternoon. If a particular motion or character style is not resonating with the target demographic, it can be adjusted and re-rendered almost immediately. This level of agility is essential in an era where social media trends can emerge and disappear within a 48-hour window.

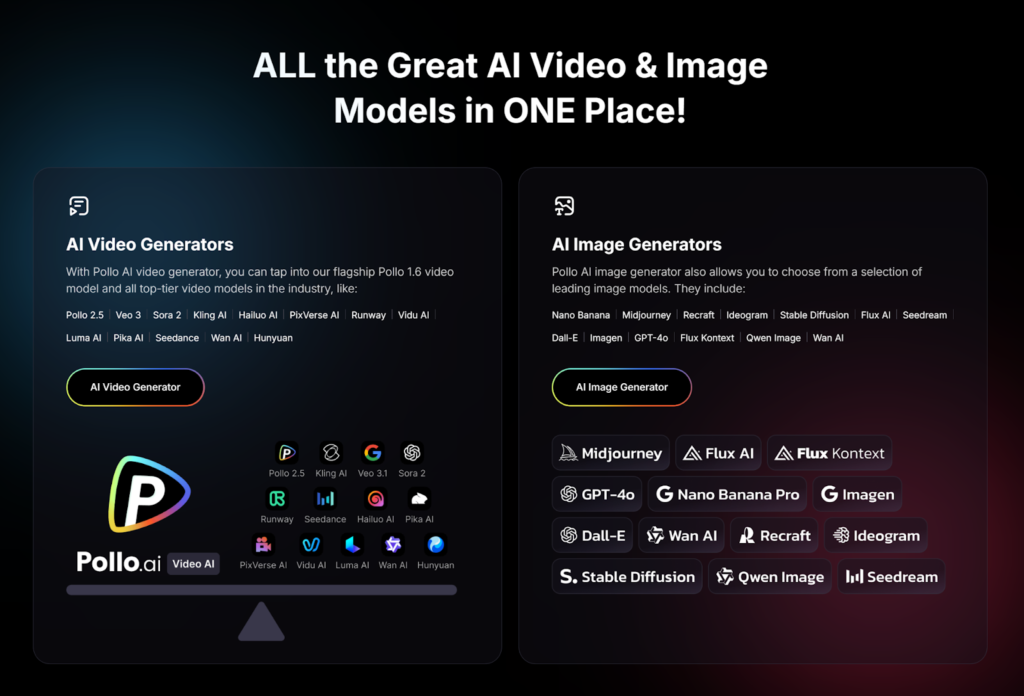

The future of this technology points toward even greater integration between different AI modalities. We are moving toward a reality where text-to-image, image-to-video, and voice synthesis are all part of a single, unified creative ecosystem. While limitations such as prompt sensitivity and occasional rendering glitches still exist, the constant updates to the underlying models ensure that these tools are becoming more robust every day. By staying at the forefront of these developments, creators can ensure that their work remains relevant, engaging, and visually stunning in an increasingly competitive digital world.