Domains of AI Glossary Class 9

- Domains of AI
- Data Science
- Data
- Data Sources
- DataSets
- Information
- Data vs Information
- Types of Data
- Big Data
- Computer Vision
- Image Recognition
- CBIR
- NLP
- NLU
- NLC

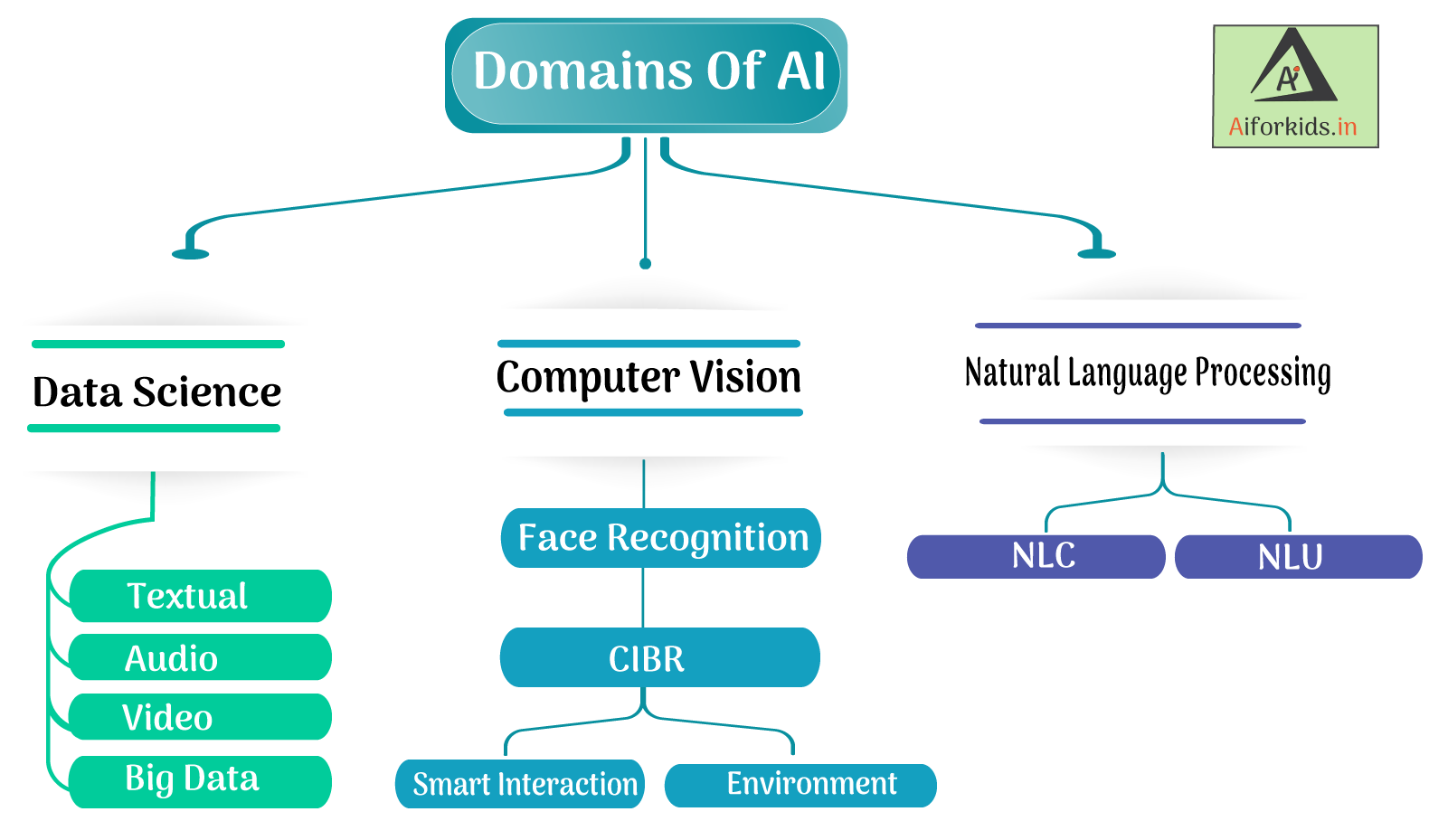

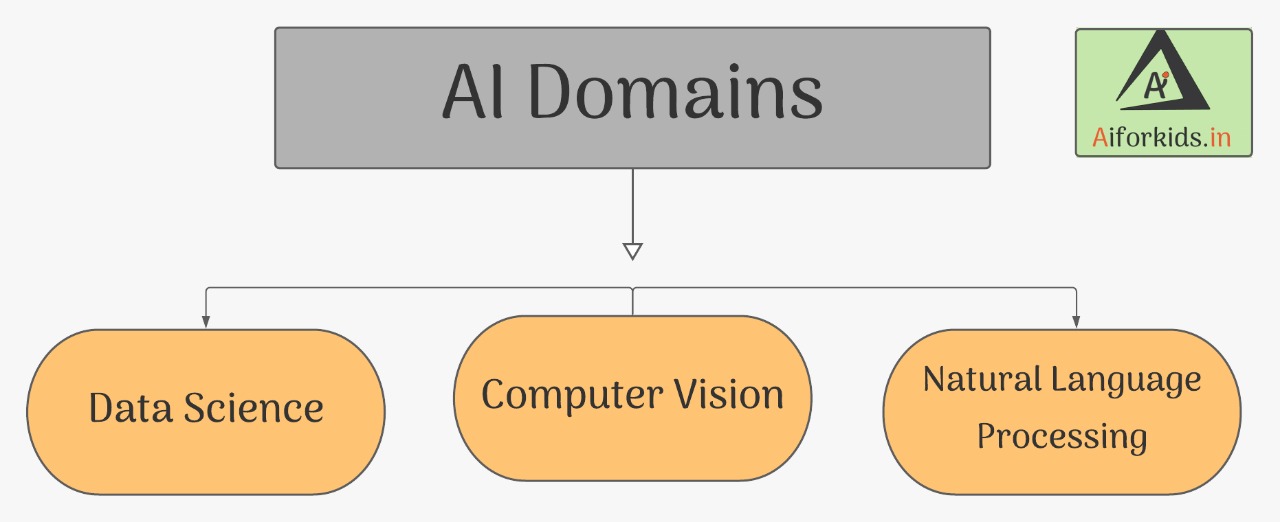

Domains of AI Overview

What are Domains of AI?

Domains of AI refers to the main branches of Artificial Intelligence, for example Data, Computer Vision and Natural Language Processing.

The flowchart below represents the 3 domains of Artificial Intelligence.

Data Science (Data)

Data Science is all about applying mathematical and statistical principles to data.

Or, in simple words, Data Science is the study of data. This data can be of 3 types: Audio, Visual and Textual.

What is Data?

How do we spend most of our time in this tech era? On the Internet. The whole Internet is based on data: you search for one product on Amazon and you get many recommendations. How?

This is the principle of a recommendation engine at work. For understanding, think of it like this: a lot of data is given to the recommendation engine, it analyses patterns such as which categories you searched in over the last week, and it then recommends products you are likely to want.
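The idea of "look at which category was searched most, then suggest products from it" can be sketched in a few lines. This is only a toy illustration: the search history, the catalogue and the `recommend` function are made-up assumptions, and real recommendation engines are far more elaborate.

```python
from collections import Counter

# Hypothetical search history from the last week: (product, category) pairs.
search_history = [
    ("running shoes", "sports"),
    ("yoga mat", "sports"),
    ("novel", "books"),
    ("football", "sports"),
]

# Hypothetical catalogue: category -> products available in that category.
catalogue = {
    "sports": ["running shoes", "yoga mat", "football", "water bottle", "skipping rope"],
    "books": ["novel", "atlas"],
}

def recommend(history, catalogue, limit=2):
    """Suggest products the user has not searched yet, from the most-searched category."""
    if not history:
        return []
    # Count how often each category appears in the history.
    category_counts = Counter(category for _, category in history)
    top_category, _ = category_counts.most_common(1)[0]
    # Skip products the user has already searched for.
    seen = {product for product, _ in history}
    return [p for p in catalogue[top_category] if p not in seen][:limit]

print(recommend(search_history, catalogue))  # ['water bottle', 'skipping rope']
```

Here "sports" is the most-searched category, so the suggestions come from it.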

Data is a collection of raw facts that can be processed to produce information. Data can be in the form of numbers, words, symbols or even pictures, for example:

| Numbers | 10, 20, 2006, 29, 06 etc |

| Words | Something, Learning, Thank you etc. |

| Symbols |

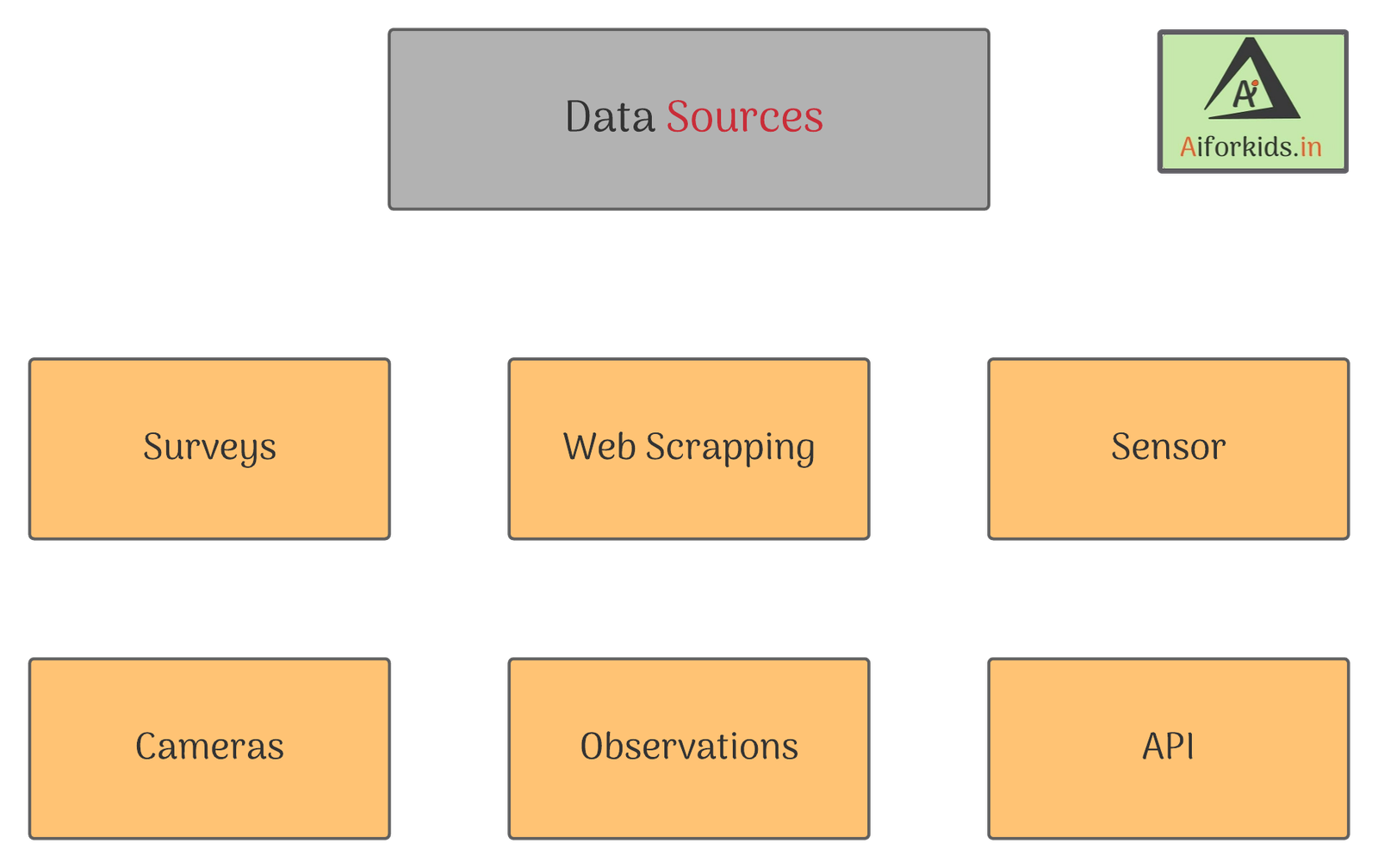

Data Sources

DataSets

A dataset is a collection of different kinds of data.

For example:

If someone posts something on Facebook, the post may get likes, shares, dislikes, comments and so on. All of this is data, and this collection is one dataset.

There are two types of datasets:

| Dataset | Purpose |
|---|---|
| Training Dataset | Used for training the model (typically about 80% of the data). |
| Testing Dataset | Used for testing the model (typically about 20% of the data). |
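A minimal sketch of how a dataset might be divided into training and testing parts is shown here. The function name `split_dataset`, the fixed seed and the sample records are assumptions made for illustration; machine learning libraries offer their own helpers for this.

```python
import random

def split_dataset(records, train_fraction=0.8, seed=42):
    """Shuffle a copy of the records, then cut it into training and testing parts."""
    shuffled = list(records)
    # A seeded generator makes the shuffle repeatable.
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

records = list(range(10))  # stand-in for 10 rows of data
train, test = split_dataset(records)
print(len(train), len(test))  # 8 2
```

Shuffling before cutting avoids putting, say, only the oldest rows into the training part.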

What is Information?

Simply put, information means more details about data arranged in some format. Information is processed, organized and structured data.
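The difference can be shown with a small sketch: a plain list of marks is data, and the summary produced from it is information. The marks and the `summarise` function are made up for this example.

```python
# Raw data: marks with no arrangement or meaning attached (made-up values).
raw_marks = [72, 85, 90, 66, 85]

def summarise(marks):
    """Process raw marks into organized, structured information."""
    return {
        "count": len(marks),
        "highest": max(marks),
        "lowest": min(marks),
        "average": sum(marks) / len(marks),
    }

print(summarise(raw_marks))
```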

Big Data

Big Data was originally associated with 3 key concepts: Volume, Variety and Velocity.

| Concept | Meaning |
|---|---|
| Volume | Amount of data produced |
| Variety | Types of data produced |
| Velocity | Speed at which data is produced |

Big Data usually includes data sets whose size is beyond the ability of commonly used software tools to capture, curate, manage and process within a tolerable elapsed time.

The term has been in use since the 1990s, with some giving credit to John Mashey for popularizing it.

The Big Data philosophy encompasses unstructured, semi-structured and structured data. However, the main focus is on unstructured data.

To learn more about data, see Understanding Data.

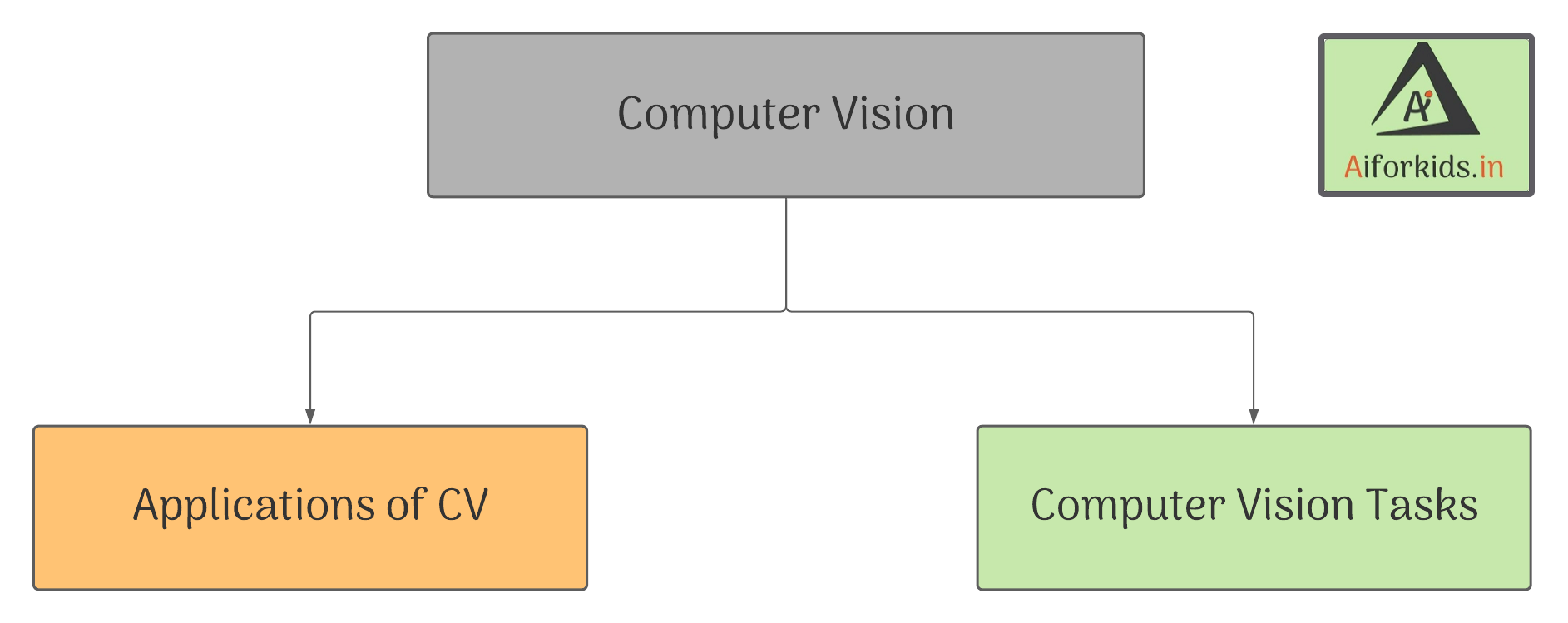

Computer Vision

In simple words, computer vision means identifying symbols in a given object (such as a picture), learning the patterns in it, and then using a camera to alert about or predict future objects.

The goal of computer vision is to understand the content of digital images.

Applications of Computer vision

Computer vision was first introduced in the 1970s, and its applications are now seen everywhere:

- Facial recognition
- Face filters
- Google Lens
- Retail stores
- Automotive
- Healthcare
- Google Translate app

Facial recognition

A facial recognition system is a technology capable of identifying or verifying a person from a digital image or from a frame of a video source. Example: it is used by crime investigation agencies.

Face filters

Applications like Instagram and Snapchat let us click photographs with various themes (filters), which are based on Computer vision.

Google Lens

To search with an image, Google uses Computer vision to compare different features of the input image with a database of images and then gives us the search results.
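The idea of comparing features of an input image with a database of images can be sketched as follows. This is not how Google Lens is implemented. It is a toy example that assumes each image has already been reduced to a short list of numbers (a "feature vector"), and the file names, values and function names are all made up.

```python
import math

def cosine_similarity(a, b):
    """Return how closely two feature vectors point in the same direction (1.0 = identical direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical database: image name -> feature vector (e.g. share of red, green, blue).
image_database = {
    "red_apple.png": [0.9, 0.1, 0.0],
    "green_leaf.png": [0.1, 0.9, 0.1],
    "blue_sky.png": [0.0, 0.2, 0.9],
}

def find_most_similar(query_features, database):
    """Return the name of the stored image whose features best match the query."""
    return max(database, key=lambda name: cosine_similarity(query_features, database[name]))

print(find_most_similar([0.8, 0.2, 0.1], image_database))  # red_apple.png
```

The query is mostly "red", so the stored image with the most similar features is the apple.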

Retail stores

One of the newest and most exciting applications of Computer vision is Amazon Go, an innovative retail store with no cashiers or checkout stations. It is a partially automated store built using computer vision and deep learning.


Automotive

Computer vision is also used in the automotive industry. Companies like Tesla are developing self-driving cars, which are expected to become common on the streets in the coming years. Automated cars are equipped with sensors and software that can detect, across 360 degrees, the movements of pedestrians, cyclists, vehicles and road work.

Healthcare

Computer vision technology is helping healthcare professionals classify conditions and illnesses accurately, which reduces inaccurate diagnoses and can save patients' lives.

Google Translate app

Google Translate is a free multilingual statistical and neural machine translation service provided by Google to translate text and websites from one language to another. The app can also translate text seen through the device camera, which relies on computer vision.

Computer vision tasks

Applications of computer vision are based on a number of tasks that can be performed on the input image in order to analyse it or predict an output.

Image classification

Image classification is the task of assigning the input image to one of a set of predefined categories.

Classification + Localisation

It is the task of not only identifying the object in the input image but also finding its location in the image.

Object detection

It is the task of finding instances of real-world objects in an image.

Instance segmentation

It can be seen as the next step after object detection. It is the process of detecting each instance of an object, giving it a category and then labelling every pixel that belongs to it.
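One way to see how the four tasks differ is to look at the kind of output each one produces. The sketch below uses made-up labels, boxes and a tiny 3 x 4 pixel grid. The `Box` class and `mask_area` helper are assumptions for illustration, not part of any particular library.

```python
from dataclasses import dataclass

@dataclass
class Box:
    """A rectangle in pixel coordinates: top-left corner plus size (hypothetical layout)."""
    x: int
    y: int
    width: int
    height: int

# Image classification: one label for the whole image.
classification = "cat"

# Classification + localisation: one label plus one box saying where it is.
localisation = ("cat", Box(x=0, y=0, width=2, height=2))

# Object detection: a label and a box for every object found.
detections = [
    ("cat", Box(x=0, y=0, width=2, height=2)),
    ("dog", Box(x=2, y=1, width=2, height=2)),
]

# Instance segmentation: a label and a pixel mask (1 = pixel belongs to the object).
instance_masks = [
    ("cat", [[1, 1, 0, 0],
             [1, 1, 0, 0],
             [0, 0, 0, 0]]),
    ("dog", [[0, 0, 0, 0],
             [0, 0, 1, 1],
             [0, 0, 1, 1]]),
]

def mask_area(mask):
    """Count how many pixels belong to one object instance."""
    return sum(sum(row) for row in mask)

for label, mask in instance_masks:
    print(label, mask_area(mask))
```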

Computer vision makes automatic checkout possible in modern retail stores, as we learned under Retail stores.

Natural Language Processing

What is NLP?

The ability of a computer to understand human language (commands), spoken or written, and to give an output by processing it is called Natural Language Processing (NLP). It is a component of Artificial Intelligence.

Let's understand it in simple words!

Now ask yourself why you are able to talk with your friend. The words spoken by your friend are taken as input by your ears, your brain processes them, and you respond as output. Most importantly, the language you use for communication is known to both of you. That's NLP in simple words!

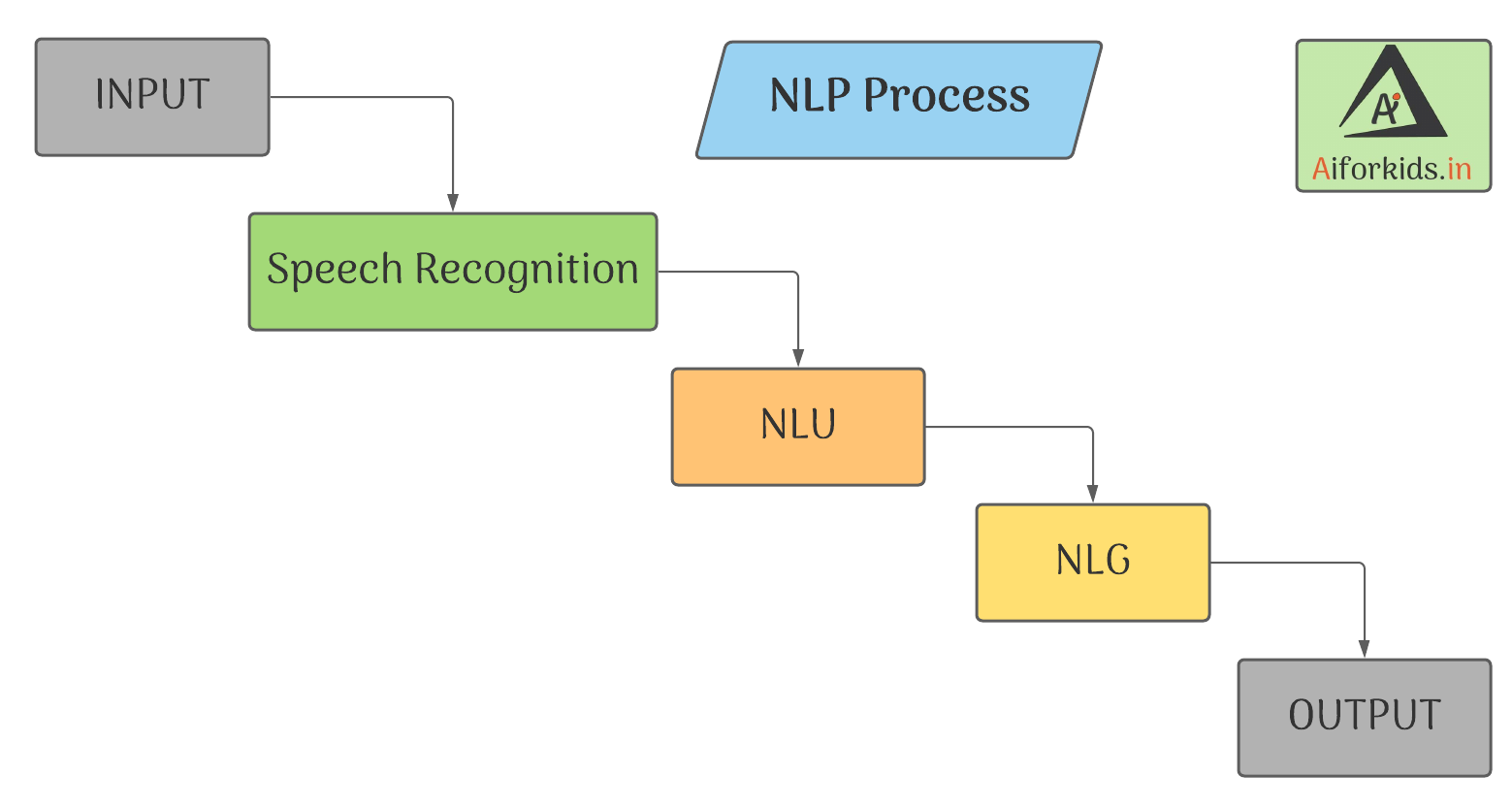

Process Of NLP

Collecting information and understanding it is called Natural Language Understanding (NLU).

Natural Language Understanding (NLU) uses algorithms to convert data spoken or written by the user into a structured data model. It does not require in-depth understanding of the input, but it has to deal with a much larger vocabulary.

Two subtopics of NLU:

1. Intent recognition: It is the most important part of NLU, because it is the process of identifying the user's intention in the input and establishing the correct meaning.

2. Entity recognition: It is the process of extracting the most important data in the input, such as names, locations, numbers and dates. For example: What happened on 9/11 (date) in the USA (place)?
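The two subtopics above can be sketched with simple keyword and pattern matching. Real NLU systems use trained models, so treat this only as an illustration. The intent names, keyword lists, place list and function names are all assumptions.

```python
import re

# Hypothetical intents and the phrases that signal them.
INTENT_KEYWORDS = {
    "ask_event": ["what happened", "what took place"],
    "ask_weather": ["weather", "temperature"],
}

# Hypothetical list of places the system knows about.
KNOWN_PLACES = ["USA", "India", "Delhi"]

def recognise_intent(text):
    """Return the first intent whose key phrase appears in the text."""
    lowered = text.lower()
    for intent, phrases in INTENT_KEYWORDS.items():
        if any(phrase in lowered for phrase in phrases):
            return intent
    return "unknown"

def recognise_entities(text):
    """Extract dates written like 9/11 or 9/11/2001, and any known place names."""
    dates = re.findall(r"\b\d{1,2}/\d{1,2}(?:/\d{2,4})?\b", text)
    places = [p for p in KNOWN_PLACES if re.search(rf"\b{re.escape(p)}\b", text)]
    return {"date": dates, "place": places}

query = "What happened on 9/11 in USA"
print(recognise_intent(query))    # ask_event
print(recognise_entities(query))  # {'date': ['9/11'], 'place': ['USA']}
```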

Thinking of an appropriate output to give the user is called Natural Language Generation (NLG).

Natural Language Generation is a software process that generates natural language output from structured data sets. It searches for the most relevant output from its network and, after arranging it and checking for grammatical mistakes, gives the best result to the user.

Steps in NLG:

Content determination: Showing only the important information, or a summary, in response to the user's speech.

For example: Google shows only a summary for a Wikipedia search.

Data interpretation: Identifying the correct data and showing it to the user in the context of the input.

For example: In a cricket match, the winners, the man of the match and the run rates of the teams.

Sentence Aggregation: The selection of words or expressions in an output sentence. For example: Selecting between synonyms such as brilliant or fantastic.

Grammaticalization: As the name suggests, it ensures that the output follows grammatical patterns and does not contain any grammatical mistakes. For example: Using "," between parts of the text.

Language Implementation: Ensuring the output data matches the user's preferences and putting it into templates.
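A very small sketch of generating a sentence from structured data, loosely following the steps above, is given here. The cricket data and the `generate_summary` function are made up, and real NLG systems are much more sophisticated than this template approach.

```python
# Hypothetical structured data about a cricket match.
match_data = {
    "winner": "Team A",
    "loser": "Team B",
    "margin": "5 wickets",
    "man_of_the_match": "Player X",
    "run_rate": 6.2,
}

def generate_summary(data, include_run_rate=False):
    """Turn structured match data into one readable sentence."""
    # Content determination: keep only the facts worth reporting.
    facts = [
        f"{data['winner']} beat {data['loser']} by {data['margin']}",
        f"{data['man_of_the_match']} was man of the match",
    ]
    if include_run_rate:
        facts.append(f"the winning run rate was {data['run_rate']}")
    # Basic grammar and template: commas between facts, "and" before the last, full stop.
    if len(facts) > 1:
        text = ", ".join(facts[:-1]) + " and " + facts[-1]
    else:
        text = facts[0]
    return text + "."

print(generate_summary(match_data))
# Team A beat Team B by 5 wickets and Player X was man of the match.
```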

For example: When you ask Google Assistant something, the words or sentences you speak are taken as input from your microphone. Google Assistant then recognizes your speech and understands it (NLU), thinks of an answer (NLG), and finally gives you the most relevant results (output) for your query!

Applications of NLP

| Application | Description |
|---|---|
| Speech Recognition | Speech recognition is when a system is able to give an output by understanding or interpreting the user's speech as an input or a command. Used in: Google Assistant, Apple Siri, Amazon Alexa etc. |
| Sentiment Analysis | Sentiment analysis is the process of detecting negative and positive sentiments in a text. Used in: YouTube's violent or graphic content policies, product reviews, identifying spam messages. |
| Machine Translation | Translation of text from one language to another by machines. Used in: Google Translate, YouTube CC, chatbots etc. |
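As an illustration of detecting positive and negative sentiment in a text, here is a minimal word-counting sketch. The word lists and the `sentiment` function are assumptions chosen for this example. Real systems learn from large amounts of labelled text instead of using fixed lists.

```python
# Hypothetical word lists; a real system would use far larger ones or a trained model.
POSITIVE_WORDS = {"good", "great", "love", "excellent", "happy"}
NEGATIVE_WORDS = {"bad", "poor", "hate", "terrible", "sad"}

def sentiment(text):
    """Label a text as positive, negative or neutral by counting known words."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE_WORDS for w in words) - sum(w in NEGATIVE_WORDS for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(sentiment("I love this phone, the camera is great!"))  # positive
print(sentiment("Terrible battery and poor screen."))        # negative
print(sentiment("It arrived on Monday."))                    # neutral
```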

Challenges in NLP

NLP is considered one of the hard problems in AI.

Challenges in NLU

Lexical Ambiguity: Some words have multiple meanings, making it difficult for a machine to understand the user's intent correctly.

For example: "tank" could mean a domestic water tank or an army tank.

Syntactic Ambiguity: The machine must work out a valid structure for the sentence, and more than one structure may fit.

Example: Old man and woman were taken to their home.

Here "old" appears only before "man" and not before "woman", yet the sentence may mean that both were old.

Semantic Ambiguity: The real meaning of the user's speech is unclear.

Example: The car hit the pole while it was moving.

Here "it was moving" comes at the end, so it is difficult for a machine to understand which one was moving, the car or the pole.

Pragmatic Ambiguity: The user's speech has multiple interpretations depending on context.

Example: The police are coming.

Here it is not stated why the police are coming.
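To see how a machine might attempt to resolve the lexical ambiguity of "tank" from the first example, here is a toy sketch that picks a meaning by counting clue words in the rest of the sentence. The clue-word sets and the `guess_sense` function are made-up assumptions, and this is a simplification, not a standard algorithm.

```python
# Hypothetical clue words for each meaning of "tank".
SENSES = {
    "water tank": {"water", "roof", "litres", "fill", "leak", "house"},
    "army tank": {"army", "soldier", "battle", "gun", "war", "military"},
}

def guess_sense(sentence):
    """Pick the meaning whose clue words overlap most with the sentence."""
    words = {w.strip(".,!?").lower() for w in sentence.split()}
    scores = {sense: len(words & clues) for sense, clues in SENSES.items()}
    best = max(scores, key=scores.get)
    # With no clue words at all, the meaning stays ambiguous.
    return best if scores[best] > 0 else "unclear"

print(guess_sense("The tank on the roof started to leak."))  # water tank
print(guess_sense("The army moved a tank into battle."))     # army tank
print(guess_sense("I saw a tank."))                          # unclear
```

The last sentence gives no context, which is exactly why such ambiguity is hard for a machine.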

Challenges in NLG

1. It should be intelligent and conversational.

2. Dealing with structured data

3. Text/sentence planning
